Ever gone to buy a fancy new graphics card and run into technobabble like this?

CUDA Cores? Stream Processors? What even are they?

If you’ve spent any amount of time looking at graphics card specifications, it’s likely that you’ve come across the words “CUDA cores” or “Stream Processors” before.

These two terms and technologies are closely related to each other, but they’re not interchangeable, and knowing the difference between them can help you make an informed decision when buying a graphics card.

In this article, I’m going to go into detail about the nuances of both—and some other “cores” you might find.

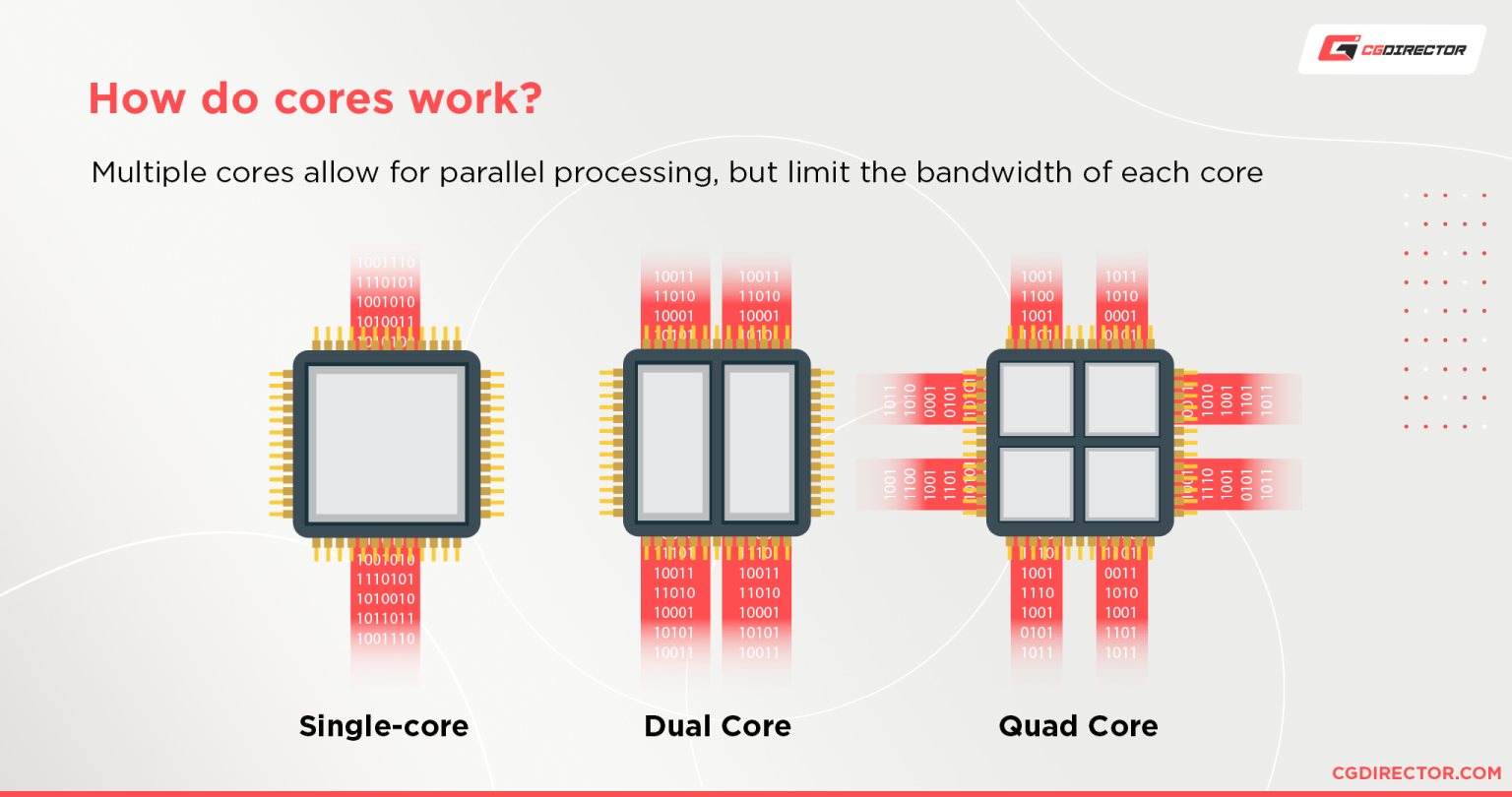

What are Cores Anyway?

Cores can be thought of as the heart of any CPU or GPU.

Like the human heart, a core can only do one thing at a time, but it can do it very quickly and efficiently.

The number of cores in a CPU, for example, is one of the main factors in how much work it can handle at once.

A CPU with a single core is a bit like a person who can either breathe or talk, but not both at the same time.

To continue that analogy, when a single-core CPU needs to breathe, it stops talking. And when it needs to talk, it stops breathing. This is in contrast to multi-core CPUs which can do all of that at once.

Having several cores provides your computer with the ability to do a special type of multitasking called parallel processing, which allows your computer to more efficiently use your processor.

A single core can be fast, but it’s limited to one task at a time. A multi-core CPU may not finish any single task sooner, but it can work on many tasks at the same time.

Now, instead of a CPU with a handful of cores, imagine a processor with thousands of cores all working in parallel for very specific tasks as opposed to the more generalized tasks that a typical CPU faces.

That is what GPUs have. And that is why GPUs are so much slower than CPUs for general-purpose serial computing, but so much faster for parallel computing.
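To put rough numbers on that trade-off, here is a minimal toy model. The core counts and per-task times are made up purely for illustration, and the function name `finish_time` is my own; real processors are far more complicated than this.

```python
import math

def finish_time(num_tasks: int, cores: int, seconds_per_task: float, parallel: bool) -> float:
    """Toy model: time needed to finish equal-sized tasks on one processor.

    If each task depends on the previous one (parallel=False), only one core
    can be busy at a time, so extra cores do not help.
    """
    if not parallel:
        return num_tasks * seconds_per_task
    # Independent tasks are handed out in rounds of `cores` tasks each.
    rounds = math.ceil(num_tasks / cores)
    return rounds * seconds_per_task

# Hypothetical chips, chosen only to illustrate the idea.
CPU = {"cores": 8, "seconds_per_task": 0.001}    # a few fast cores
GPU = {"cores": 4000, "seconds_per_task": 0.01}  # many slower cores

tasks = 100_000
for name, chip in (("CPU", CPU), ("GPU", GPU)):
    serial = finish_time(tasks, chip["cores"], chip["seconds_per_task"], parallel=False)
    par = finish_time(tasks, chip["cores"], chip["seconds_per_task"], parallel=True)
    print(f"{name}: serial {serial:.2f} s, parallel {par:.2f} s")
```

In this made-up scenario the GPU is ten times slower on the serial job but fifty times faster on the parallel one, which is the pattern described above.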

The cores on a GPU are usually referred to as “CUDA Cores” or “Stream Processors.”

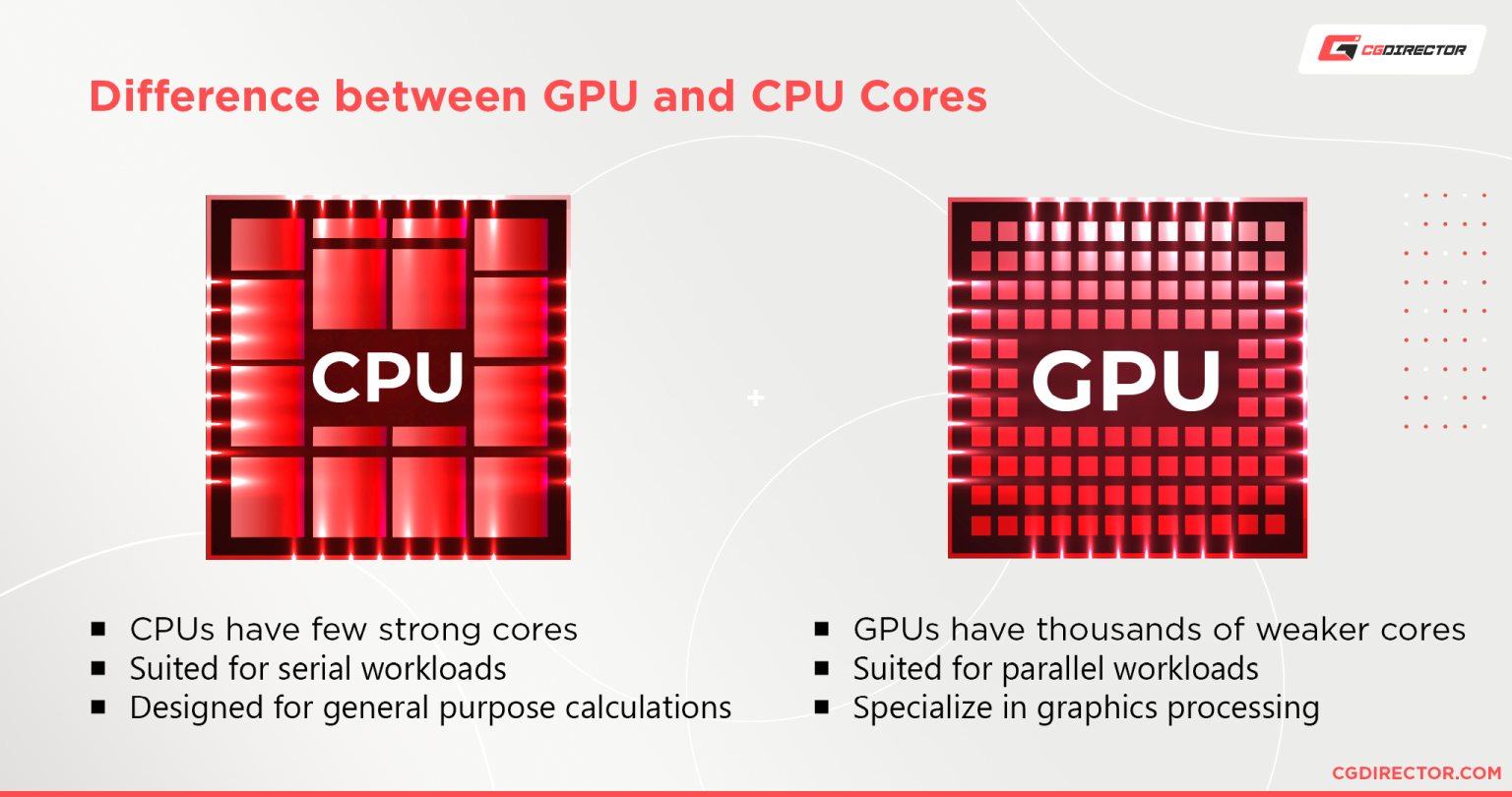

What are the Differences Between CPU Cores and GPU Cores?

There are many similarities between CPU cores and GPU cores, but they also have many differences.

CPU cores are designed to work through long, complex sequences of instructions as quickly as possible. They’re built for general-purpose calculations and have a wide variety of uses.

GPU cores were originally designed for a single purpose: graphics processing. They’re simpler and more specialized, and very efficient at applying the same operation to large amounts of data.

CPUs use a small handful of very powerful cores, while GPUs are built with a large number of comparably less powerful cores.

A GPU is great at doing parallel tasks, like quickly working out what thousands of pixels need to look like in fractions of a second.
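Pixel work parallelizes so well because each pixel can usually be computed without knowing anything about its neighbors. The sketch below is plain Python running on a CPU, not GPU code, and the `shade` and `render` functions are hypothetical names for illustration. It assumes an image at least 2 pixels wide and tall.

```python
def shade(x: int, y: int, width: int, height: int) -> tuple[int, int, int]:
    """Compute one pixel's color from its coordinates alone (a simple gradient)."""
    red = 255 * x // (width - 1)
    green = 255 * y // (height - 1)
    return (red, green, 128)

def render(width: int, height: int) -> list[list[tuple[int, int, int]]]:
    # No pixel depends on any other pixel, so every call to shade() could in
    # principle run at the same time. This loop simply visits them one by one.
    return [[shade(x, y, width, height) for x in range(width)] for y in range(height)]

image = render(4, 3)
print(image[0][0], image[2][3])
```

A GPU gets its speed by handing each of those independent `shade` calls to a different core instead of looping over them.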

The difference between CPUs and GPUs is that each is purpose-built to do different types of processing.

What are CUDA Cores and what are they used for?

“CUDA” is a proprietary technology developed by NVIDIA and stands for Compute Unified Device Architecture.

CUDA Cores are used for a lot of things, but the main thing they’re used for is to enable efficient parallel computing.

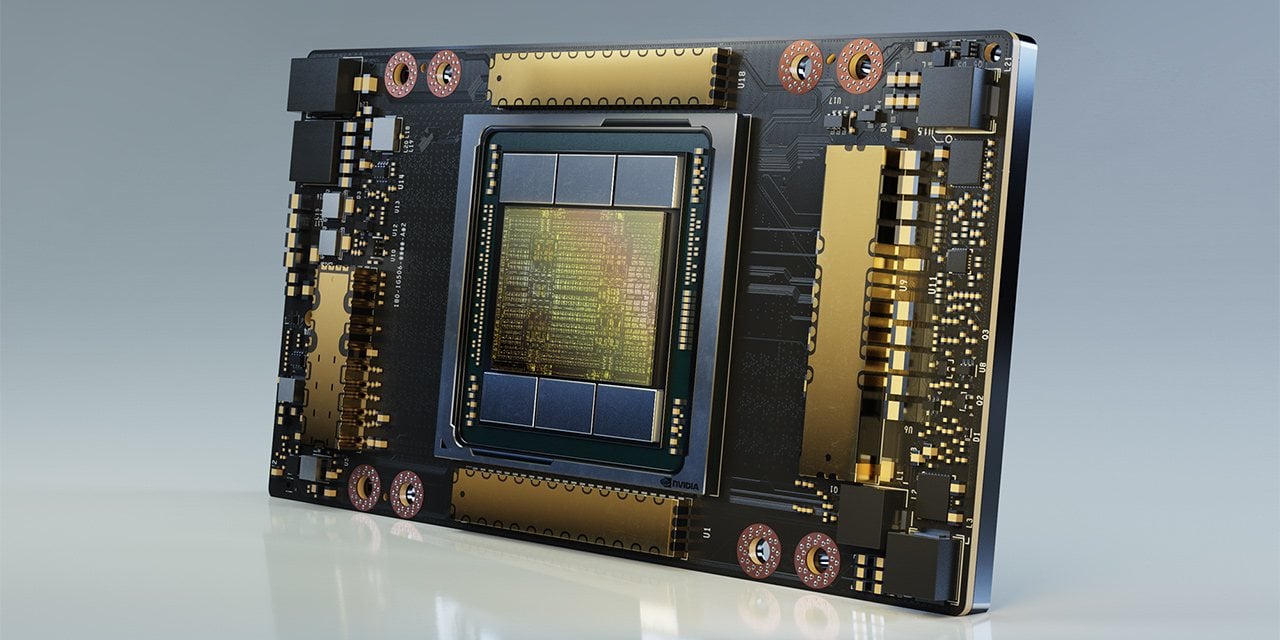

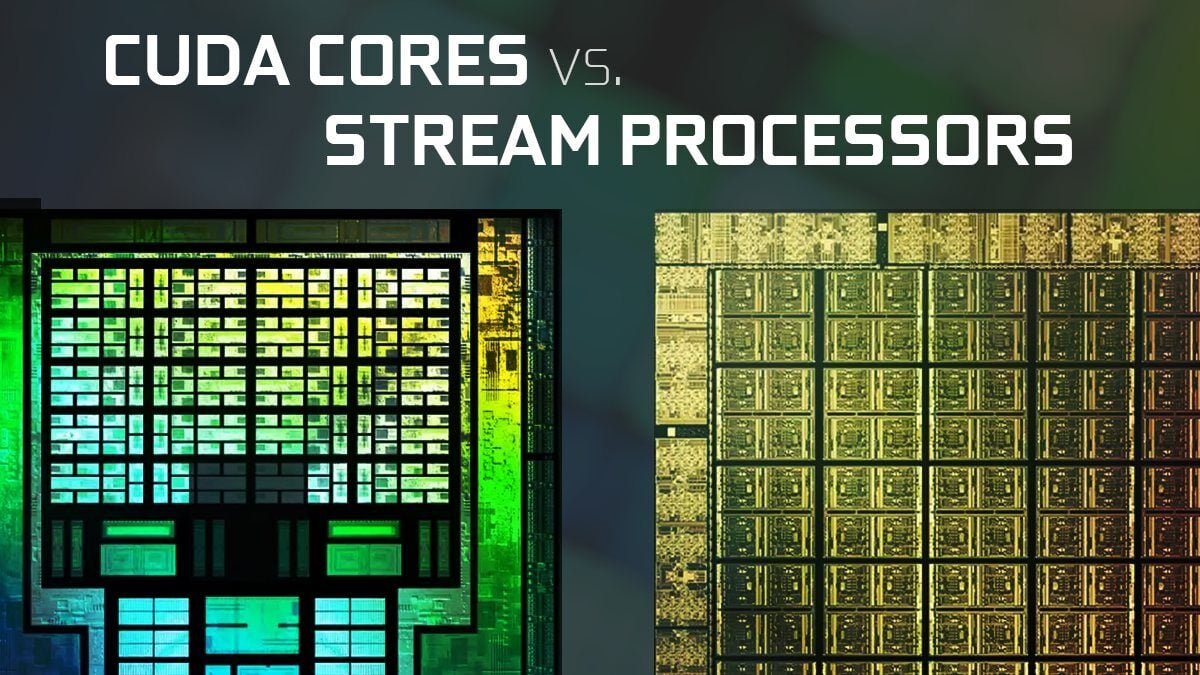

Image-Source: Nvidia

A single CUDA core is similar to a CPU core, with the primary difference being that it is less capable but implemented in much greater numbers, which again allows for great parallel computing.

A typical CPU contains anywhere from 2 to 16 cores, but the number of CUDA cores in even the lowliest modern NVIDIA GPUs is in the hundreds.

Meanwhile, high-end cards now have thousands of them.

But CUDA is more than just a set of cores: it is also the programming interface that lets software send work to those cores and exchange data with the rest of your system.

The cores that execute the instructions issued through that interface are called CUDA cores.
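To give a feel for that programming model: a CUDA program is written as a small function (a "kernel") that many threads run at once, and each thread uses its block and thread index to pick the piece of data it works on. The following is a plain-Python emulation of that idea, not real CUDA code. The `launch` helper and `vector_add_kernel` are hypothetical stand-ins I made up for illustration.

```python
def vector_add_kernel(block_idx, thread_idx, block_dim, a, b, out):
    """Body executed by one simulated thread: add a single pair of elements."""
    i = block_idx * block_dim + thread_idx  # this thread's global index
    if i < len(out):                        # guard threads that fall past the end
        out[i] = a[i] + b[i]

def launch(kernel, num_blocks, block_dim, *args):
    # A real GPU would run many of these threads simultaneously across its
    # cores; this stand-in simply loops over every (block, thread) pair.
    for block_idx in range(num_blocks):
        for thread_idx in range(block_dim):
            kernel(block_idx, thread_idx, block_dim, *args)

a = [1.0, 2.0, 3.0, 4.0, 5.0]
b = [10.0, 20.0, 30.0, 40.0, 50.0]
out = [0.0] * len(a)

block_dim = 4
num_blocks = (len(a) + block_dim - 1) // block_dim  # round up to cover all elements
launch(vector_add_kernel, num_blocks, block_dim, a, b, out)
print(out)
```

The bounds check matters because the number of launched threads (8 here) is rounded up past the number of elements (5).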

What are Stream Processors and what are they used for?

NVIDIA has its CUDA cores, but AMD, its chief competitor, has a rival technology called “Stream Processors”.

Now, these two technologies and the respective companies’ GPU architectures are different.

However, at the end of the day, they are fundamentally the same thing when it comes to their core functions and what they are used for.

CUDA Cores vs Stream Processors

Generally, NVIDIA’s CUDA Cores are regarded as more stable and better optimized, a reputation NVIDIA’s hardware tends to enjoy over AMD’s.

But there are no noticeable performance or graphics quality differences in real-world tests between the two architectures.

Because Nvidia has been the #1 GPU manufacturer for a long time, there is just much wider support for CUDA cores compared to AMD’s Stream Processors.

After all, the CUDA architecture is proprietary, and developing software for it is a cumbersome process. It’s understandable that render engine developers, game engine developers, and game developers prefer to develop for and support the GPU hardware that is more widely used.

But in its essence, both CUDA Cores and Stream Processors are similarly capable, if the software support is on the same level. So you don’t need to worry about what is “better” when it comes to GPU cores as they’re both pretty much the same.

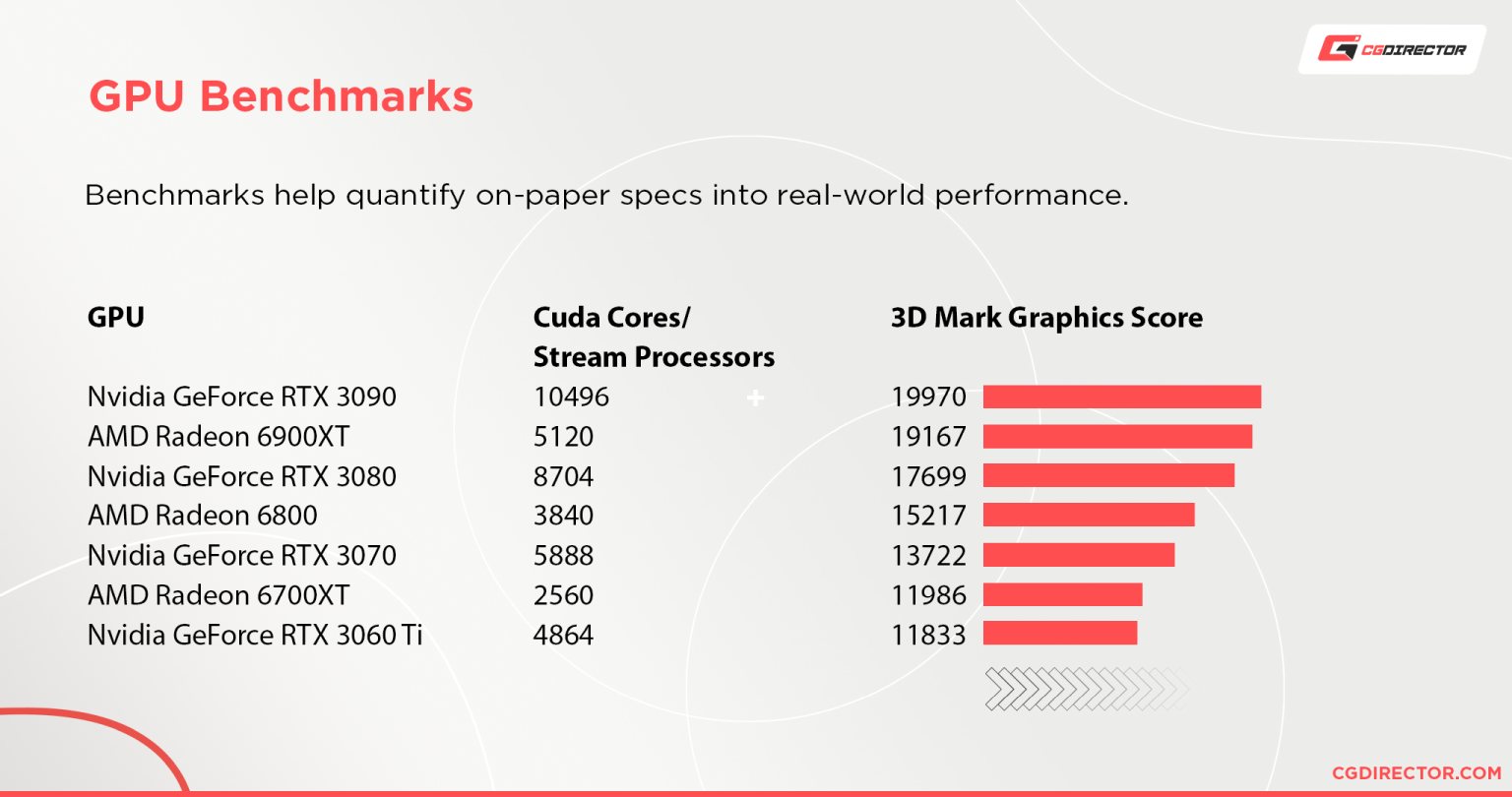

How to Compare GPUs

It’s very hard to compare GPUs through their specifications alone.

NVIDIA’s and AMD’s definitions of cores themselves are different and their core architectures are wildly different as well.

You can’t just go with an NVIDIA GPU because it has a thousand more CUDA cores than a comparable AMD card, and you can’t just go with an AMD GPU for the same reasons.
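A quick bit of arithmetic shows why core counts alone mislead. A common back-of-the-envelope figure is theoretical peak FP32 throughput, which assumes every core completes one fused multiply-add (counted as two operations) per clock cycle. The two cards below are hypothetical, not real products, and the function name is my own.

```python
def peak_fp32_tflops(shader_cores: int, clock_ghz: float) -> float:
    """Theoretical peak, assuming each core retires one fused multiply-add
    (counted as 2 floating-point operations) per clock cycle."""
    return 2 * shader_cores * clock_ghz / 1000

# Hypothetical cards, not real products.
card_a = peak_fp32_tflops(shader_cores=8000, clock_ghz=1.5)
card_b = peak_fp32_tflops(shader_cores=5000, clock_ghz=2.4)
print(f"Card A: {card_a:.1f} TFLOPS, Card B: {card_b:.1f} TFLOPS")
```

Card B has 3,000 fewer cores, yet both land at about 24 TFLOPS on paper because of the clock speed difference. Even that figure is only a theoretical ceiling, and it says nothing about memory bandwidth, drivers, or how well a given application uses the hardware.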

The best and only real way to compare the performance of GPUs is through real-world benchmark results.

We put together two comparison tables for Nvidia’s GPUs and AMD’s GPUs to make this process easier for you.

What are NVIDIA Tensor Cores?

First introduced in NVIDIA’s Volta architecture, Tensor Cores are a type of core designed to make artificial intelligence and deep learning more accessible and more powerful.

Since the inception of CUDA GPUs, the architecture has been designed with one important goal in mind: to make the creation of parallel, highly efficient programs easier. And for the last decade, it’s done a great job of that.

But, thanks to the success and innovations brought on by deep learning, an AI-based technology that uses neural networks to learn to perform tasks without being explicitly programmed, NVIDIA needed to make some major changes to better support these cutting-edge technologies.

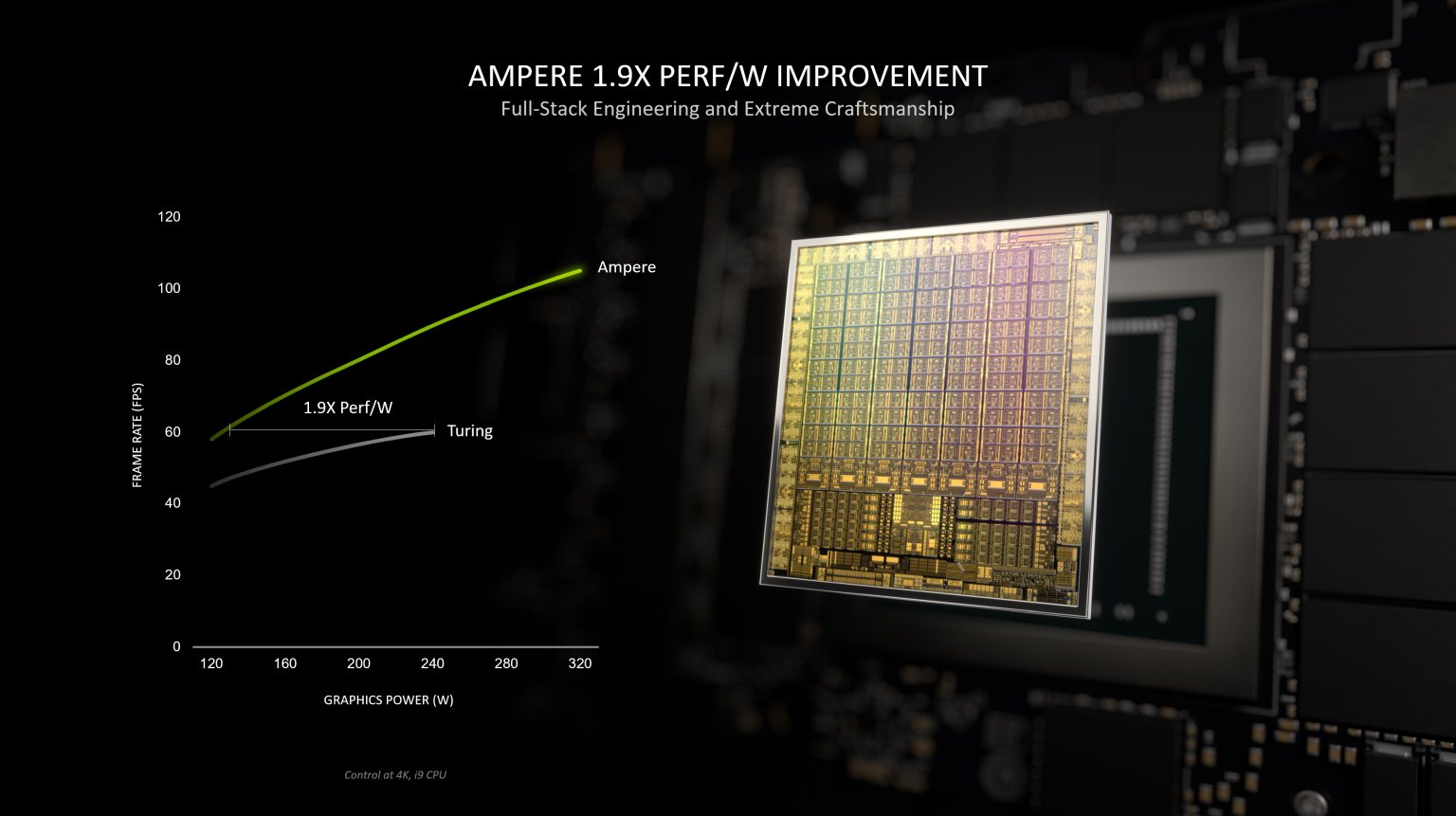

NVIDIA’s new architecture, Ampere, has brought even more major changes with its 3rd generation Tensor Cores that will make it much easier and faster to train deep learning models.

GA100 GPU, Image-Source: Nvidia

These cores are designed with deep learning in mind, and they are much more efficient than the old Tensor Cores that are found in the Volta and Turing architectures before Ampere.

The Tensor Cores are designed to handle matrix multiplication and convolution operations, which are the bread and butter of deep learning algorithms.
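The basic operation behind this is a matrix multiply-accumulate, D = A × B + C, which Tensor Cores carry out on small fixed-size tiles in hardware. Here is a minimal pure-Python sketch of that operation. The 2×2 size is chosen only for readability, and the function name is my own.

```python
def matmul_accumulate(a, b, c):
    """Return D = A x B + C for matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) + c[i][j] for j in range(cols)]
        for i in range(rows)
    ]

a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
c = [[1, 1], [1, 1]]
print(matmul_accumulate(a, b, c))  # [[20, 23], [44, 51]]
```

A neural network layer boils down to a very large number of these multiply-accumulate steps, which is why dedicated hardware for them pays off.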

NVIDIA claims that its 3rd generation Tensor Cores can deliver up to 20x the performance of the older cores in certain AI workloads.

That is a lot of speed, and it should noticeably cut the time it takes to train and run deep learning models.

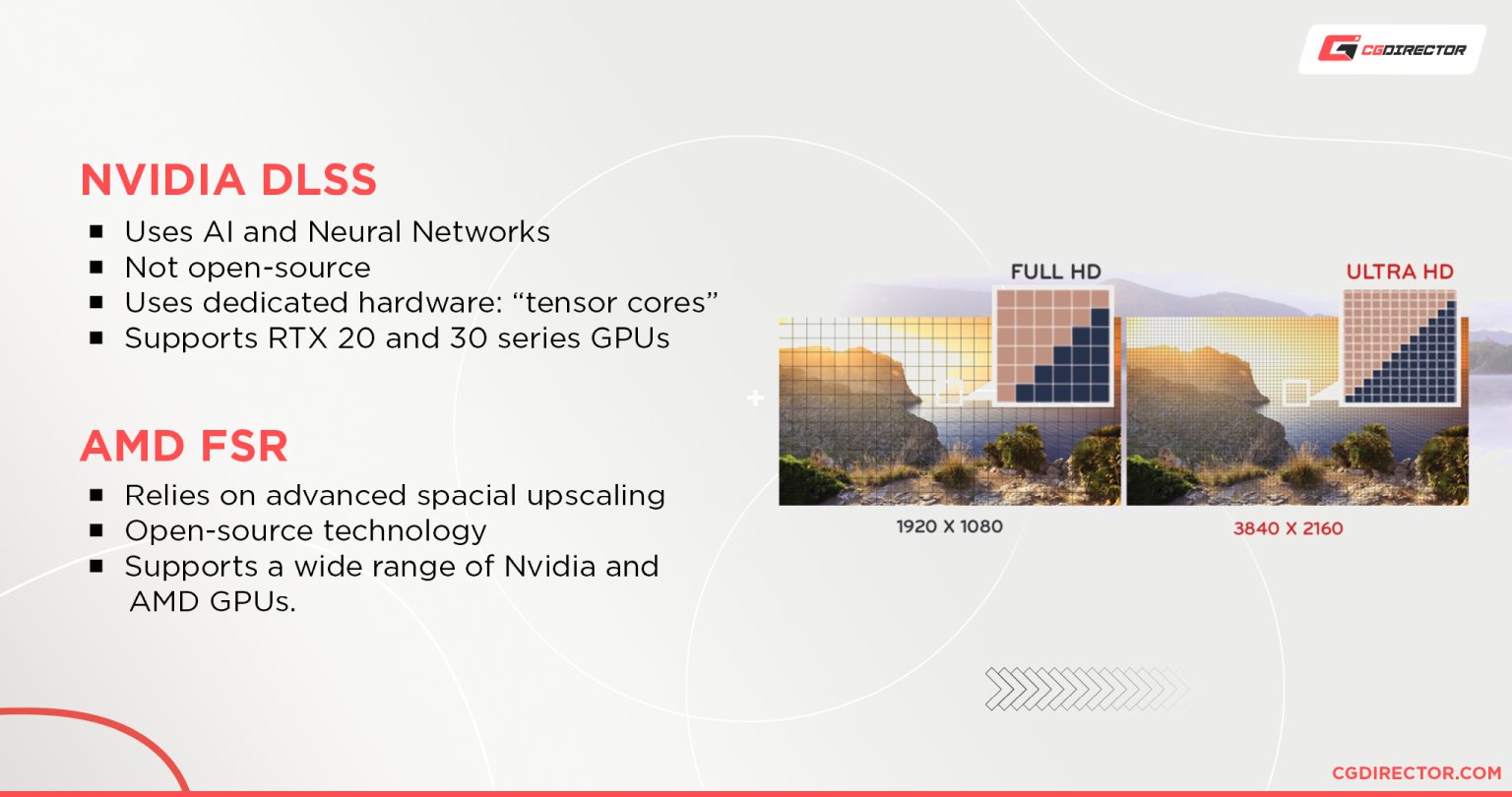

One of the technologies that actually uses deep neural network learning is NVIDIA’s own DLSS technology—and it’s something that the average Joe or Jill can actually use now!

It is a technology that leverages deep learning and artificial intelligence to enhance the graphics and performance of games (currently) without compromising visual quality.

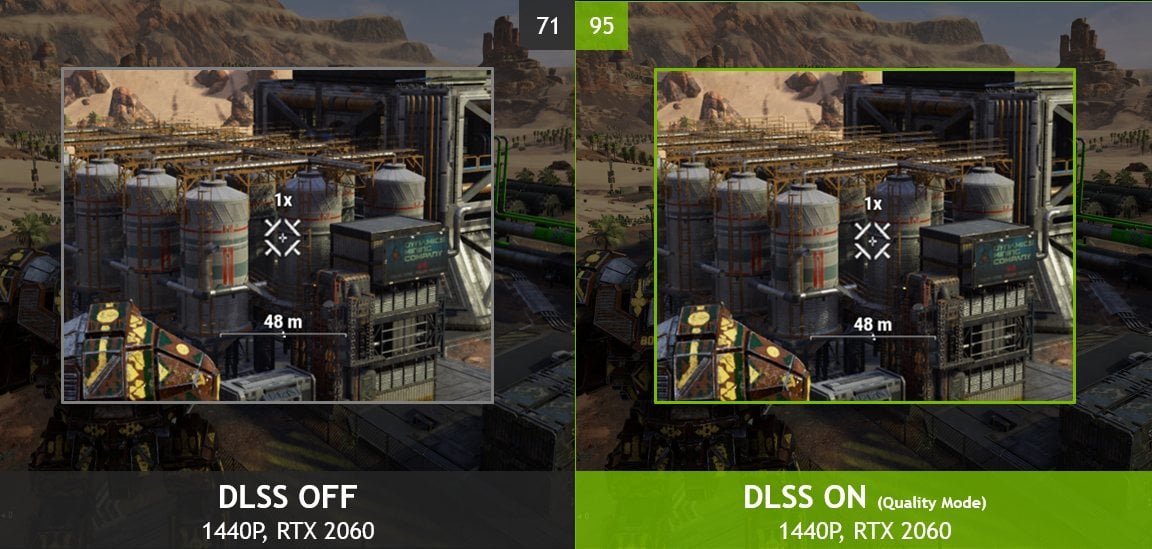

Image Credit: Wolfenstein: Youngblood – NVIDIA

DLSS stands for Deep Learning Super Sampling.

When DLSS is enabled in supported games, it uses a deep neural network to analyze and improve upon the standard image-enhancement techniques employed by traditional anti-aliasing.

The enhanced images produced by DLSS are usually comparable to 4K images but are rendered at significantly lower resolutions, which gives major performance improvements.
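The saving is easy to estimate from pixel counts. The internal resolutions below are illustrative assumptions rather than the settings of any particular DLSS mode, and the upscaling step itself also takes some time, so real-world gains will be smaller than these raw ratios.

```python
def pixel_count(width: int, height: int) -> int:
    """Number of pixels in a frame of the given size."""
    return width * height

output = pixel_count(3840, 2160)  # 4K output resolution

# Illustrative internal render resolutions (assumed for this example).
for label, (w, h) in {"2560x1440": (2560, 1440), "1920x1080": (1920, 1080)}.items():
    internal = pixel_count(w, h)
    print(f"{label}: {output / internal:.2f}x fewer pixels to render than 4K")
```

Rendering at 1440p means shading 2.25 times fewer pixels than native 4K, and rendering at 1080p means 4 times fewer.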

Image Credit: MechWarrior 5 – NVIDIA

DLSS works on graphics cards starting from the Turing-architecture GPUs, but it entered into prime time with the Ampere generation.

Because DLSS is a new technology, it is not available in all games, and it’s not guaranteed to work in every situation.

But NVIDIA is continuously working with developers to improve its performance.

What are NVIDIA RT Cores?

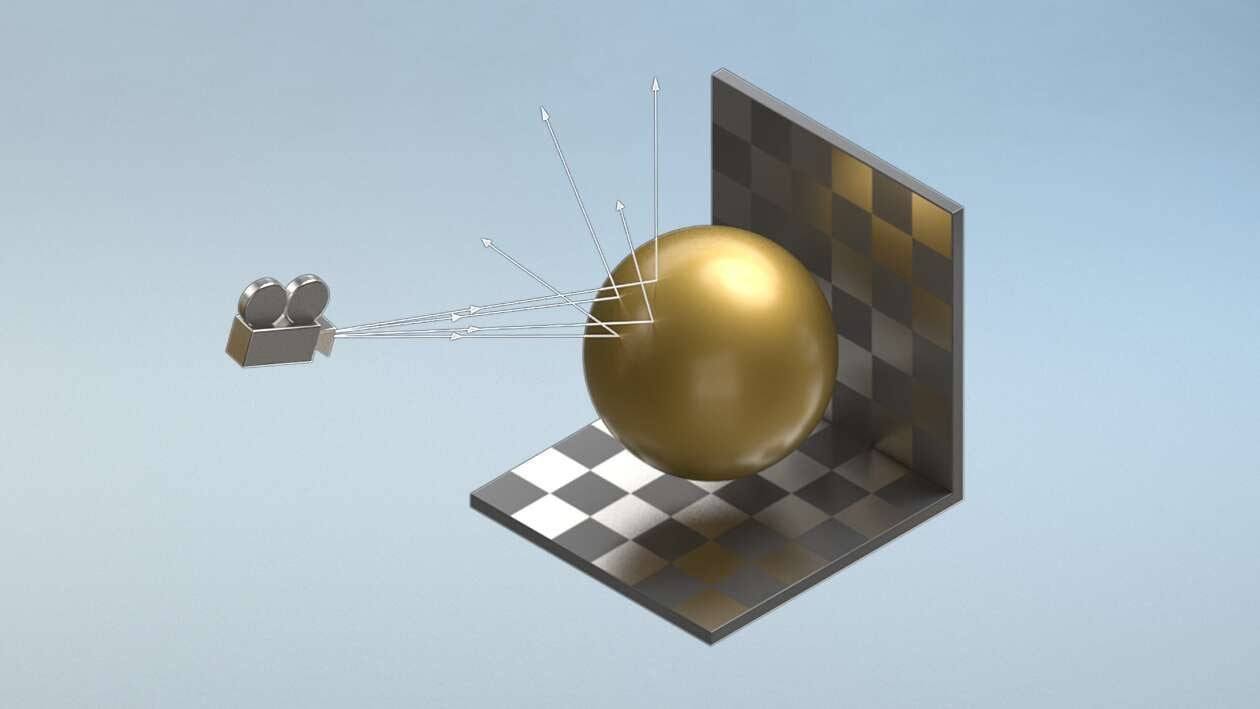

NVIDIA has also introduced another new type of core: the RT Cores—Ray Tracing Cores.

These cores are dedicated hardware for ray tracing, a rendering technique that aims to mimic the way light reflects and refracts as it interacts with different materials.

Ray tracing has been used in professional 3D rendering for years, but it has only recently become practical for real-time rendering. NVIDIA’s RT cores are an important part of this development.

Raytracing on RTX GPUs, Image-Source: Nvidia

NVIDIA’s RT cores are designed to accelerate ray tracing calculations and have been specially designed for these tasks.
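One of the calculations a ray tracer repeats millions of times per frame is checking whether a ray hits a triangle. The sketch below uses the Möller-Trumbore algorithm, a standard software method for that test. It is shown only to illustrate the kind of math involved, not as a description of how the hardware does it internally.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ray_hits_triangle(origin, direction, v0, v1, v2, eps=1e-9):
    """Moller-Trumbore test: distance along the ray to the hit, or None on a miss."""
    edge1, edge2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, edge2)
    det = dot(edge1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    inv_det = 1.0 / det
    t_vec = sub(origin, v0)
    u = dot(t_vec, p) * inv_det
    if u < 0.0 or u > 1.0:
        return None  # hit point lies outside the triangle
    q = cross(t_vec, edge1)
    v = dot(direction, q) * inv_det
    if v < 0.0 or u + v > 1.0:
        return None  # hit point lies outside the triangle
    t = dot(edge2, q) * inv_det
    return t if t > eps else None  # ignore hits behind the ray origin

triangle = ((0, 0, 5), (1, 0, 5), (0, 1, 5))
print(ray_hits_triangle((0.25, 0.25, 0), (0, 0, 1), *triangle))  # 5.0
print(ray_hits_triangle((2, 2, 0), (0, 0, 1), *triangle))        # None
```

A real scene contains millions of triangles, so a renderer also needs a fast way to skip most of them for each ray, and that adds up to an enormous number of these tests every frame.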

They can considerably speed up the rendering of ray-traced games and other 3D graphics compared to running the same calculations on general-purpose shader cores.

The 2nd generation of RT Cores introduced with the Ampere architecture is also much more efficient and powerful than the older cores.

Generational performance uplift – Nvidia

NVIDIA’s new architecture is a huge step forward in the world of deep learning and artificial intelligence-enhanced media.

The new Tensor Cores and RT Cores make it possible to create some truly amazing applications. Who knows what the future holds for these new technologies?

Fully path-traced real-time renders might not be too far off in the future if we keep innovating at this rate.

What are AMD Ray Accelerators?

AMD Ray Accelerators are AMD’s answer to NVIDIA’s RT Cores.

AMD joined the ray-tracing competition with its RX 6000 series, and with it introduced a few important features to the RDNA 2 architecture that help it compete with NVIDIA’s RT Cores.

These “Ray Accelerators” are supposed to increase the efficiency of the standard GPU compute units in the computational workloads related to ray tracing.

However, the mechanism behind Ray Accelerators is still relatively vague, and the technology is still in its infancy, as AMD was somewhat slow to adapt to the real-time ray tracing revolution.

As of right now, AMD’s Ray Accelerators don’t exactly match up to NVIDIA’s relatively more mature RT Cores on performance.

And AMD also doesn’t have a viable competitor to NVIDIA’s DLSS technology. However, a solution similar to it is actively being worked on.

So there’s a definite chance that the tables might turn with AMD’s upcoming RDNA 3 architecture.

In Summary

Hopefully, that cleared things up for you.

If you’re still feeling lost, however, here are the main points you should take away from this.

Stream Processors and CUDA Cores are branded names for the same thing: a parallel processor and the set of rules for its operation.

Under the hood, however, the two are implemented differently because AMD and NVIDIA each use their own unique architecture.

But there is no great real-world performance difference between the two.

Trying to directly compare Stream Processors and CUDA Cores to each other is like trying to measure your car’s mileage by looking at the size of your fuel tank.

It makes much more sense to just take the car for a drive.

Looking at hard data will always be far more valuable than making comparisons between CUDA Cores and Stream Processors or any other specification on its own.

FAQ

- How many cores do I need in a GPU?

- The more the merrier. You can’t have enough cores on a GPU. But higher core counts come with higher costs, so you have to balance that out.

- Do more cores equal better performance?

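- Generally, yes, as long as you’re comparing GPUs of the same brand and generation. It’s certainly not the only thing that determines the performance of a GPU, but it does play a big part in it, so within a product family you can generally assume that higher core counts (whether it be CUDA, Stream Processors, or otherwise) will amount to better performance. Across brands or generations, rely on benchmarks instead.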

- How do cores affect 3D modeling performance?

- Modeling specifically? Not that much actually. Modeling is usually a CPU-bound activity. If you’re running into slowdowns when it comes to modeling, it’s usually because you’re either running out of RAM or your CPU is having a hard time keeping up with all those polygons.

- I’d recommend taking a look at our article about creating a computer for 3D modeling to learn more.

- Are CUDA Cores better than Stream Processors?

- They’re both very similar in their function and performance.

- The only upper hand that CUDA might have over Stream Processors is that it’s generally known to have better software support.

- But overall, there’s no great difference between the two.

- Do AMD cards have CUDA Cores?

- No. CUDA Cores are a proprietary technology developed by NVIDIA that’s only available in NVIDIA GPUs.

We hope that explains exactly what all those cores on GPUs are! Have any other questions about them? Let us know in the comments or our forum!


6 Comments

19 July, 2023

Thank you! Really helpful !

19 October, 2022

Dear Alex. I have a z170mobo with an i5 6600K and 32g memory. I work on video editing and rendering. Do you happen to know what card I should consider in order to HELP my cpu on video preview and rendering on an older Adobe Premiere version or other video editing software? Thanks a lot.

7 November, 2022

With a PCIe 3 x16 Slot available you can add in pretty much any modern card without any performance implications. Of course your CPU might bottleneck a GPU that is too high-end.

I’d say a GTX 1660 Super or a RTX 3060 would pair well with your other components if that’s in your wheelhouse budget-wise.

Alex

19 September, 2022

I learned a lot so thank you, now I can make a better decision for buying a new laptop

the one I use now is 5 years old…

19 September, 2022

Hey Tedo,

Glad we could help! Let me know of any questions you might have.

Cheers,

Alex

24 February, 2022

Thank you very much. Cleared up a lot of questions I had reg GPU cores.