When you have an integrated or dedicated GPU, your operating system will let it use as much as half of your RAM as shared GPU memory.

That, to the uninitiated, might seem strange, but there’s actually a very logical explanation behind it all.

Before we delve any deeper into the why and how, let’s first “deconstruct” this topic into four key questions:

- What is shared GPU memory?

- How does it affect your PC?

- Does it affect your rendering, 3D modeling, and gaming performance?

- Can you turn this peculiar feature off and, if so, should you?

Let’s take a closer look!

What is Shared GPU Memory?

Let’s start off with the basic definition:

Shared GPU memory is a type of virtual memory that’s typically used when your GPU runs out of dedicated video memory.

Shared GPU memory, therefore, is not the same as dedicated GPU memory, and there's a big difference between these two types of video memory.

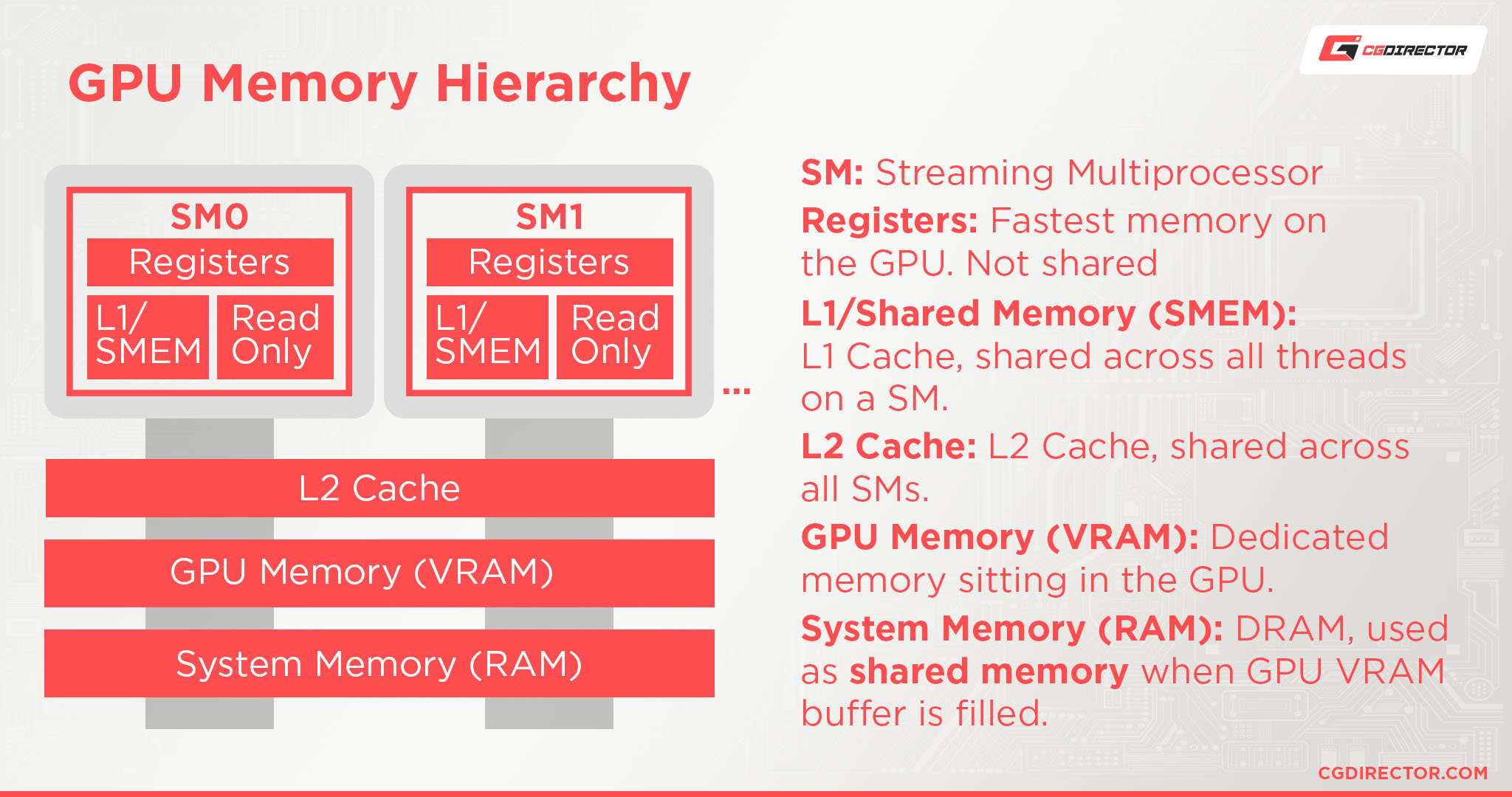

Source: Ashan Priyadarshana and NVIDIA

Why do operating systems use RAM as a source of memory for your GPU when there’s much more of it available on hard disk drives and SSDs?

The reason is rather simple: RAM is much faster than any SSD or HDD out there.

Difference Between Dedicated and Shared GPU Memory

Dedicated and shared GPU memory aren’t the same.

Dedicated GPU memory is the physical memory located on a discrete graphics card itself. Generally, these are high-speed memory modules (GDDR / HBM) placed close to the GPU's core chip, which are used for rendering software, applications, and games (amongst other things).

Shared GPU memory is “sourced” from your system RAM. It isn't a separate physical memory chip but a virtual allocation: a portion of your system RAM that the GPU is allowed to use as VRAM (video RAM) once your dedicated GPU memory (if you have any) is full.

If you have an iGPU (a GPU integrated into your CPU), it has no dedicated VRAM of its own, so it has to use your system RAM. (The small amount of “dedicated” memory Windows may report for an iGPU is a slice of system RAM reserved by the BIOS/UEFI.)

Your system will make up to 50% of your physical RAM available as shared GPU memory, regardless of whether you have an integrated or a dedicated GPU.

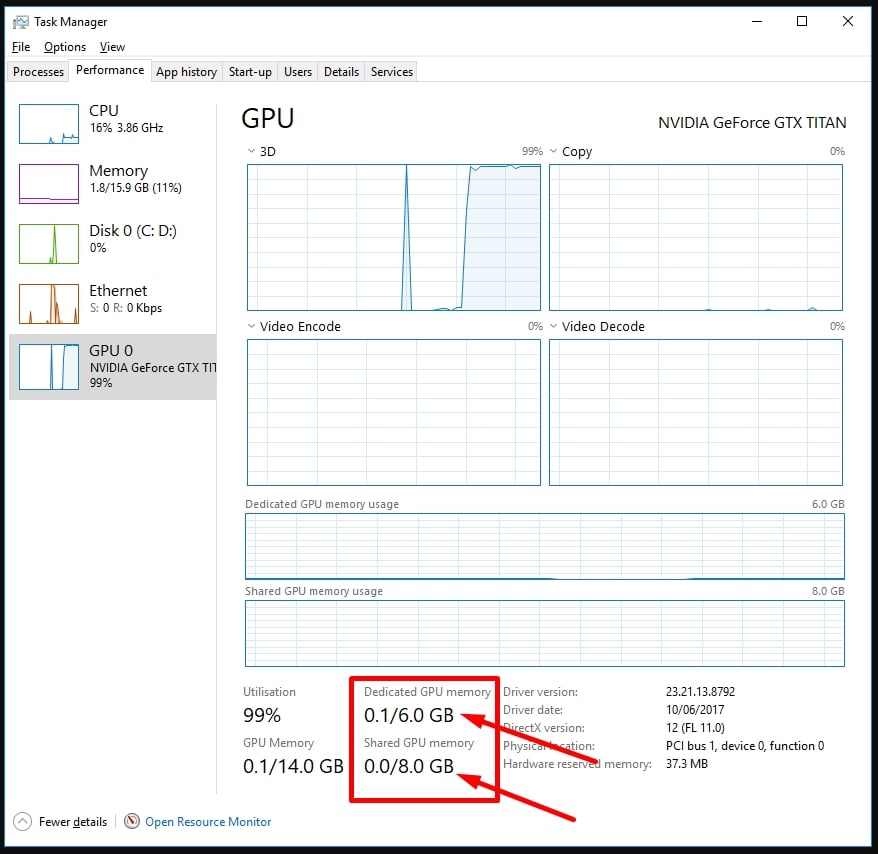

As you can see in the Task Manager screenshot, my PC has an Nvidia GTX Titan with 6GB of VRAM (Dedicated GPU Memory), and because I have 16GB of system RAM, 8GB of it (half) is allocated as “Shared GPU Memory”.

Shared GPU Memory in Windows Task Manager

Should You Decrease or Increase Shared GPU Memory?

An important question arises: should you tinker with these settings? Well, it really depends on your setup.

If you have a dedicated GPU, leaving things as they are would probably be for the best. Depending on your particular graphics card and its VRAM capacity, shared GPU memory might not even be used at all!

If push comes to shove and your OS does have to fall back on shared memory, it won't cause performance issues beyond stutters (frame-time spikes) and frame drops from the moment your GPU's VRAM buffer is full.
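To see why spilling into shared memory hurts so much, here is a toy model in Python. It assumes the GPU reads a fixed amount of data, that the part still in VRAM is read at VRAM bandwidth and the spilled part over PCIe, and it ignores caching, driver paging, and access patterns. The bandwidth figures are illustrative assumptions (a 256-bit GDDR6 card at 14 Gbps and a PCIe 4.0 x16 link), not measurements of any specific card.

```python
# Toy model (illustrative assumptions, not measurements): the part of the data
# that fits in VRAM is read at VRAM bandwidth, the part that spilled into
# shared memory is read over the PCIe link.

def effective_bandwidth(vram_gbps: float, pcie_gbps: float, spill_fraction: float) -> float:
    """Average bandwidth (GB/s) when `spill_fraction` of the data lives in shared memory."""
    if not 0.0 <= spill_fraction <= 1.0:
        raise ValueError("spill_fraction must be between 0 and 1")
    # Time per GB is the weighted sum of the time per GB on each path.
    seconds_per_gb = (1.0 - spill_fraction) / vram_gbps + spill_fraction / pcie_gbps
    return 1.0 / seconds_per_gb

VRAM_GBPS = 448.0  # assumed: 256-bit GDDR6 at 14 Gbps
PCIE_GBPS = 31.5   # assumed: PCIe 4.0 x16, one direction

for spill in (0.0, 0.05, 0.10, 0.25):
    bw = effective_bandwidth(VRAM_GBPS, PCIE_GBPS, spill)
    print(f"{spill:>4.0%} spilled -> ~{bw:6.1f} GB/s effective")
```

Under these assumptions, spilling just 10% of the data cuts effective bandwidth to less than half of the VRAM figure, which is the kind of sudden slowdown that shows up as stutter.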

If you don't have a dedicated GPU but an integrated one (such as the Intel UHD Graphics 730), it's still best to leave shared GPU memory settings alone.

Modern operating systems do a great job of managing and allocating memory, so it’s best to let them do their thing.

Since up to 50% of your system RAM can already be used by your (i)GPU, it's unlikely that a workload will need even more video memory (shared memory) without also needing that RAM for everything else it does.

Only if you ran an unusual application that leaned heavily on your GPU while barely using your CPU and system RAM might allocating more shared GPU memory make a noticeable difference.

Conclusion

Dedicated memory represents the amount of physical VRAM a GPU possesses, whereas shared GPU memory represents a virtual amount taken from your system’s RAM.

Modern operating systems do a great job when it comes to optimizing shared video memory usage.

There’s really no need for you to manually adjust anything, though if your fingers are itching, you can do so by adjusting video memory settings in your BIOS or – in case you have an Intel or AMD APU – by making the desired changes in the Windows Registry Editor.

FAQ

Is shared GPU Memory slower than dedicated GPU Memory (VRAM)?

Yes. Because shared memory is system RAM, a GPU that has to fall back on it for its computations will take a performance hit.

Dedicated VRAM modules sit right next to the GPU's core chip and can be accessed much more quickly than system RAM, which a discrete GPU has to reach over the PCIe bus through the motherboard.
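The gap can be put into rough numbers with the standard peak-bandwidth formulas. The configurations below (a 256-bit GDDR6 card at 14 Gbps, dual-channel DDR4-3200, and PCIe 4.0 links) are example assumptions, and the results are theoretical maximums rather than real-world throughput.

```python
# Back-of-the-envelope peak bandwidth figures (theoretical maximums).
# The specific configurations below are illustrative assumptions.

def gddr_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """GDDR peak bandwidth: bus width (bits) x per-pin data rate (Gbit/s) / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8

def ddr_bandwidth_gbs(mt_per_s: int, channels: int) -> float:
    """DDR4 peak bandwidth: MT/s x 8 bytes per 64-bit channel x channels, in GB/s."""
    return mt_per_s * 8 * channels / 1000

def pcie_bandwidth_gbs(gt_per_s: float, lanes: int) -> float:
    """PCIe 3.0/4.0 peak bandwidth per direction, accounting for 128b/130b encoding."""
    return gt_per_s * lanes * (128 / 130) / 8

links = {
    "VRAM (256-bit GDDR6 @ 14 Gbps)": gddr_bandwidth_gbs(256, 14.0),
    "System RAM (dual-channel DDR4-3200)": ddr_bandwidth_gbs(3200, 2),
    "PCIe 4.0 x16 (GPU <-> rest of system)": pcie_bandwidth_gbs(16.0, 16),
    "PCIe 4.0 x4 (typical NVMe SSD link)": pcie_bandwidth_gbs(16.0, 4),
}

for name, gbs in links.items():
    print(f"{name:<40} ~{gbs:6.1f} GB/s")
```

For a discrete GPU, shared memory is limited by the slower of the PCIe link and system RAM, so in this example it tops out around 31.5 GB/s against roughly 448 GB/s for VRAM. An iGPU skips the PCIe hop and reads system RAM directly, but it shares that bandwidth with the CPU. The last line also hints at why RAM beats SSDs as a fallback: even the link to a fast NVMe drive is several times slower than dual-channel RAM.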

What is Shared GPU Memory Used For?

Shared GPU memory is borrowed from the total amount of available RAM and is used when the system runs out of dedicated GPU memory.

The OS taps into your RAM because it's the next best thing performance-wise; RAM is a lot faster than any SSD on the market, and that is likely to remain the case for the foreseeable future.

How to Change Shared GPU Memory Value in Windows?

Changing the amount of your system’s shared GPU memory is not as straightforward as one might think.

You’ll need to tinker around with your BIOS settings if you want to adjust or turn off the shared GPU memory feature.

It’s not recommended, though, as making these kinds of changes isn’t going to improve the performance of your system in most workloads.

What’s the Difference Between Total, Dedicated, and Shared GPU Memory?

Dedicated video memory is the amount of physical memory on your GPU.

Shared GPU memory is the amount of virtual memory that will be used in case dedicated video memory runs out. This is typically capped at 50% of your system RAM.

When these two pools of memory are combined, you get the total amount.
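Here is a minimal sketch of that arithmetic, assuming the shared limit is the typical 50% of system RAM (your system may report a different figure). The function name and structure are illustrative, not taken from any Windows API.

```python
# Sketch of how the GPU memory figures in Windows Task Manager relate,
# assuming the common default that the shared limit is half of system RAM.

def gpu_memory_summary(dedicated_gb: float, system_ram_gb: float, shared_fraction: float = 0.5) -> dict:
    """Return dedicated, shared and total GPU memory in GB."""
    shared_gb = system_ram_gb * shared_fraction
    return {
        "dedicated": dedicated_gb,
        "shared": shared_gb,
        "total": dedicated_gb + shared_gb,
    }

# Example from this article: GTX Titan with 6 GB of VRAM and 16 GB of system RAM.
print(gpu_memory_summary(dedicated_gb=6, system_ram_gb=16))
```

With the GTX Titan example from earlier (6GB of VRAM, 16GB of system RAM), this gives 8GB of shared memory and 14GB in total.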

Over To You

How much VRAM does your graphics card have, and did you ever tinker with any of these settings?

Let us know in the comments section down below or, alternatively, on our forum!

12 Comments

16 October, 2023

hello i have delll laptop with intel intel hd graphics 620 this dedicated memory is 64mb and shared is 2gb the total system ram is 4 gb so i have increase system ram 4gb to 8 gb the shared gpu ram is increase please tell me im so confused

7 November, 2023

Hey Kaim, I'm not 100% sure what you're asking. Dedicated GPU memory is the physical VRAM that the GPU can use, and the shared memory is the memory it'll take from your system RAM. If you increase your system RAM, it may very well be that your GPU will take more of it, though you should be able to set a fixed amount in your OS.

6 July, 2023

Hello

I have an 8gig ram system but my shared system memory is about 2gig and I don’t know where the problem is from cause I believe its meant to be about half of the ram. And yes, the system recognizes the full ram as 8gig

20 June, 2023

I currently have a laptop with a GTX 3050ti and 4gb of dedicated memory and 32gb of system memory. I find it to be a great budget gaming option since i’m not picky about settings and it can run most games at 1080p medium or high settings.

I’m just wondering. Do I see any real benefit for having the extra system ram to spare? I play a lot of games like fallout 4 with tons of graphic and texture mods. Even vanilla never dipped below 60fps for me. But i’m concerned about newer titles like Starfield and whether my 4gb 3050ti might be fine with the shared memory I have.