
Machine Learning (ML).

Quite possibly the hottest buzzword of the 21st century that actually has the power to bring us into the futuristic world we all dream of.

Having evolved from rudimentary pattern recognition systems, ML allows computers to learn the way a human would, through repeated iterations.


By learning, evolving, failing, and repeating the process over and over again with the gentle guidance of programmers, ML systems can learn to perform tasks that previously only humans could do.

Whether you are developing recommendation systems to figure out what roast of coffee your customers might like or creating the revolutionary algorithms that could one day power self-driving cars, you need to have a well-designed PC that’s up to the task.

To do that, however, we need to clear some things up first.

Different ML projects can require different hardware, and we can’t list all potential ML projects that you might want to do.

So this guide will mostly cover the basics of what a great ML workstation needs in general.

But we’ll also cover the basics of how different machine learning libraries might use hardware differently, and we’ll discuss how different ML techniques (supervised and unsupervised) might impact our PC build.

You can use that knowledge to customize our suggested builds, if need be, to create something that matches your exact needs.

So, with that out of the way, let’s jump on in.

Understanding Machine Learning Libraries and Use Cases

Machine Learning Libraries

ML has been used to enhance our lives in so many ways.

Image recognition (Face ID), speech recognition (Google), recommendation systems (YouTube, Netflix, TikTok), fraud detection (Spam filters), virtual assistants (Siri), automatic language translation (Google Translate), medical diagnosis (cancer use case), and so much more rely on it to function.

And all of these systems make extensive use of things called ML libraries.

Different ML libraries like PyTorch, Keras, scikit-learn, OpenCV, NumPy, etc., all have different objectives and use cases. Because of this, the hardware requirements differ from library to library.

PyTorch, for example, is a deep learning framework that’s widely used for computer vision and natural language processing.

Image Credit: PyTorch

And libraries like Keras and TensorFlow focus on artificial and deep neural networks.

Scikit-learn focuses on predictive data analysis, and OpenCV is used for real-time computer vision applications, and so on.

These different use cases make it pretty tricky to pick PC parts. But regardless of what you’re doing, one of the most important components for ML workloads is the graphics card (GPU).

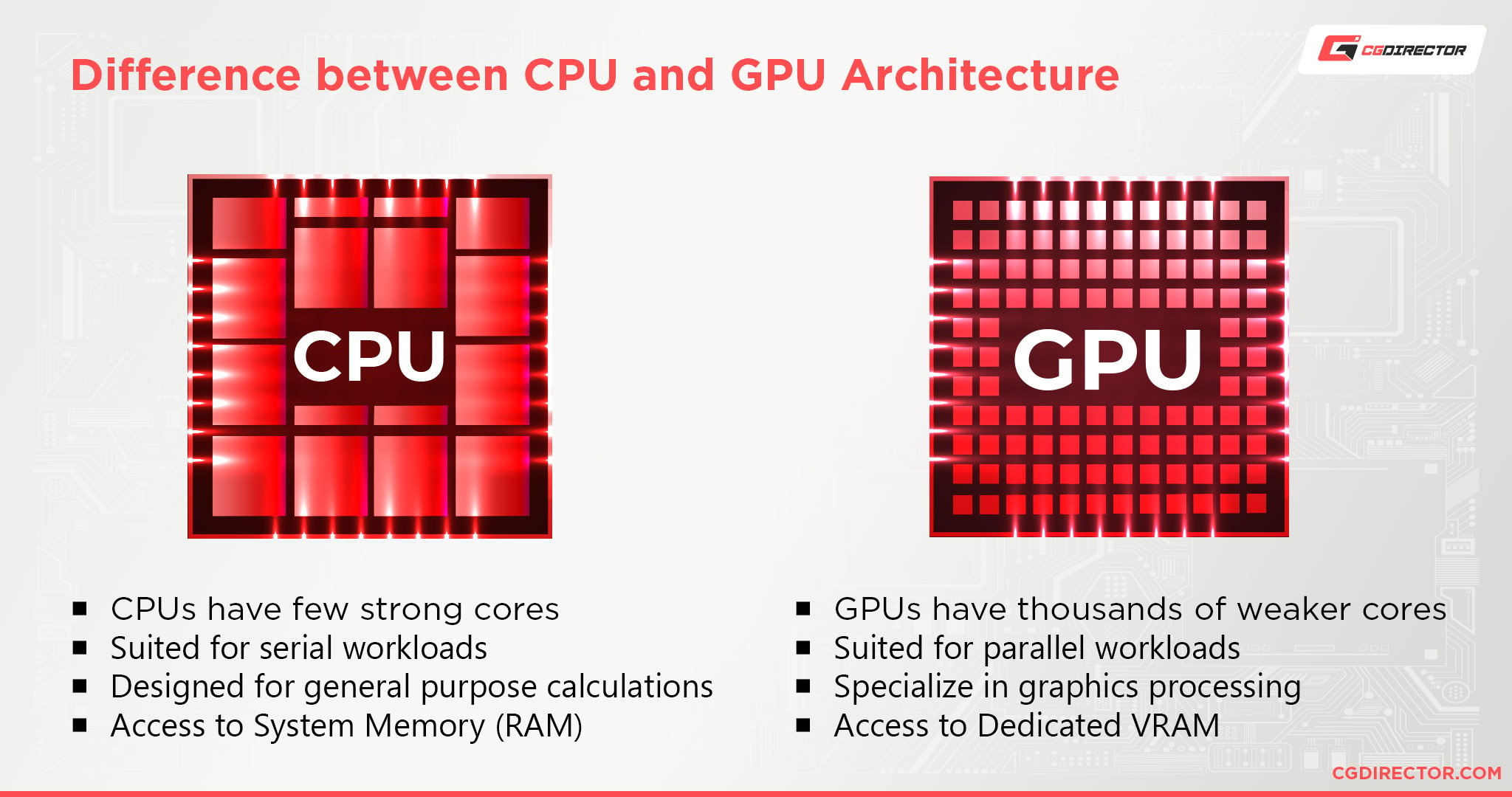

Most ML libraries strongly prefer running tensor operations on a GPU, thanks to its parallel computation capabilities (doing a lot of things at once), rather than on a central processing unit (CPU).

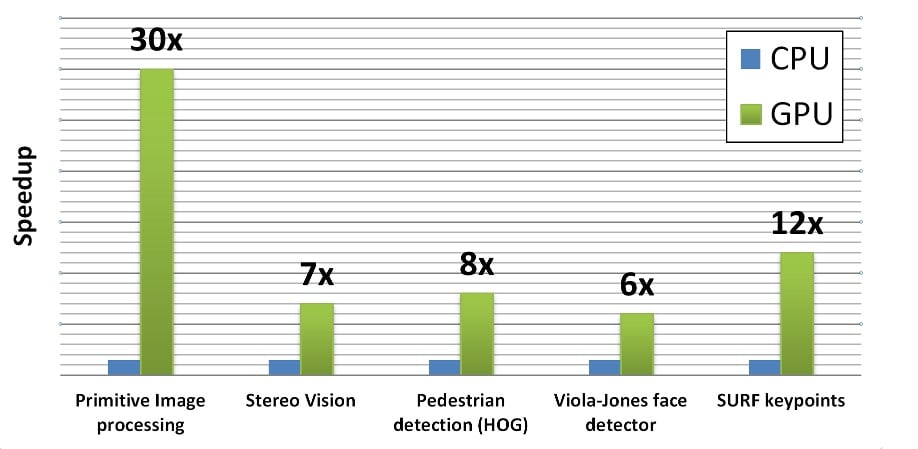

Certain ML libraries like OpenCV can use the CUDA capabilities of Nvidia GPUs to perform certain operations at 12 to 30 times the speed of a CPU.

Source: LearnOpenCV

This isn’t to say that all you need is a good GPU, however.

Good CPUs are incredibly important for certain ML libraries as well, but as we can see, when it comes to machine learning, GPU is king—well, tensor processing units (TPUs) are actually king, but we’ll get to that.
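To make the GPU-versus-CPU choice concrete, here’s a minimal sketch of how a training script might decide where to run. It assumes PyTorch as the library (one of several mentioned above) and falls back to the CPU if PyTorch isn’t installed; `pick_device` is a hypothetical helper name, not part of any library.

```python
def pick_device() -> str:
    """Return "cuda" when PyTorch can see an Nvidia GPU, otherwise "cpu"."""
    try:
        import torch  # optional dependency; only needed for the GPU check
    except ImportError:
        # Assumption for this sketch: no PyTorch means we just use the CPU.
        return "cpu"
    return "cuda" if torch.cuda.is_available() else "cpu"


device = pick_device()
print(f"Training would run on: {device}")
```

A script written this way still runs on a machine without an Nvidia card, just more slowly.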

Machine Learning Use Cases

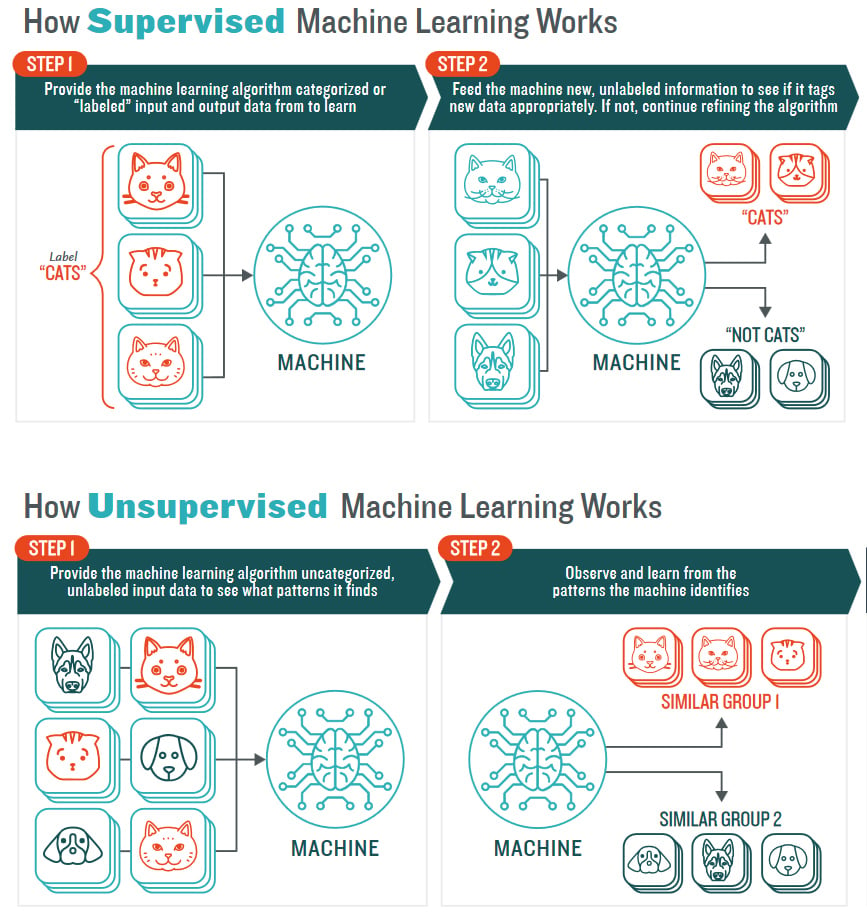

There are two main techniques used in Machine Learning, and they’re called supervised and unsupervised learning.

Source: Lotus Quality Assurance

With supervised learning, the algorithm is trained using data that are well labeled and described by humans to help the algorithm learn.

Think of training an AI to identify key points on a large collection of invoices.

You’d first help the AI to figure out where all the important fields are.

It then uses this information to predict and figure out by itself where all the important fields on future invoices might be.
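As a rough illustration of the invoice idea, here’s a tiny supervised learner in plain Python. It’s only a sketch: the coordinates, the field names, and the `predict_field` helper are all made up for this example, and a nearest-neighbour lookup stands in for whatever model a real invoice system would use.

```python
import math

# Hypothetical labeled training data: the (x, y) position of a text box on an
# invoice page (0..1 coordinates) and the field name a human assigned to it.
labeled_examples = [
    ((0.10, 0.05), "invoice_number"),
    ((0.12, 0.07), "invoice_number"),
    ((0.80, 0.90), "total_amount"),
    ((0.78, 0.92), "total_amount"),
]


def predict_field(position):
    """Predict a label by copying the label of the closest training example."""
    nearest = min(labeled_examples, key=lambda ex: math.dist(ex[0], position))
    return nearest[1]


print(predict_field((0.11, 0.06)))  # a box near the top-left examples
print(predict_field((0.79, 0.88)))  # a box near the bottom-right examples
```

The human-provided labels do all the teaching here: the code never decides on its own what an "invoice number" is.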

On the opposite end of the spectrum, unsupervised learning lets the algorithm discover the characteristics and patterns of unlabeled data on its own, with no supervision.

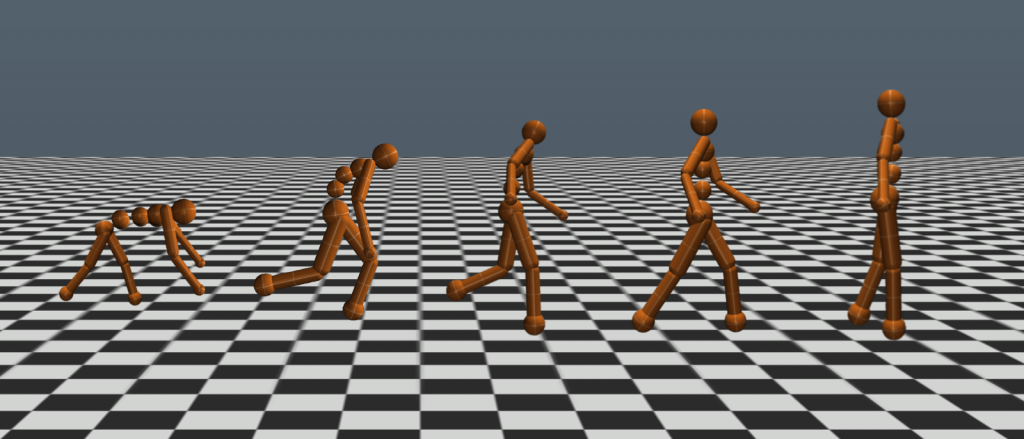

Think of a 3D program where human characters are created.

These characters don’t have any inherent locomotion logic to them, but they can contract and relax muscles the way an actual human might.

You then create an AI whose purpose is to make it so that the human characters walk from point A to point B by using their muscles.

Image Credit: Uber Engineering – Kenneth O. Stanley and Jeff Clune

You then run the simulation and see your creations fall down face first and just generally do anything but walk.

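But eventually, after enough generations and beneficial mutations, your characters will be able to slowly walk on their own, having learned it purely through trial and error.

Strictly speaking, this kind of trial-and-error training is usually classed as evolutionary or reinforcement learning rather than unsupervised learning, but it shows what learning without human-labeled examples looks like.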
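The mutate-and-keep-what-works loop from the walking example can be sketched in a few lines. This is a toy stand-in, not a physics simulation: the `fitness` function, its target values, and the mutation size are all assumptions chosen so the example runs instantly.

```python
import random


def fitness(genome):
    """Hypothetical score: higher is better, with a best possible value of 0."""
    target = [0.5, -0.2, 0.8]
    return -sum((g - t) ** 2 for g, t in zip(genome, target))


def evolve(generations=500, seed=0):
    rng = random.Random(seed)
    best = [rng.uniform(-1, 1) for _ in range(3)]
    history = [fitness(best)]
    for _ in range(generations):
        # Mutate: nudge every gene by a small random amount.
        child = [g + rng.gauss(0, 0.1) for g in best]
        # Select: keep the child only if it scores at least as well.
        if fitness(child) >= fitness(best):
            best = child
        history.append(fitness(best))
    return best, history


best, history = evolve()
print(f"first score: {history[0]:.4f}, final score: {history[-1]:.4f}")
```

Nothing tells the algorithm how to improve. It just keeps the mutations that help, which is why this style of training needs so many generations.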

Now, when it comes to the computational requirements of the two techniques, there aren’t any major differences between them.

However, certain ML projects, such as those that use deep learning (which can be supervised or unsupervised) or that work on images and videos, increase the processing requirements because of the larger number of matrix calculations involved.
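To get a feel for how quickly those matrix calculations grow, here’s a back-of-the-envelope sketch that counts the floating-point operations for a single fully connected layer. The layer sizes are hypothetical and only there for comparison.

```python
def dense_layer_flops(batch_size, inputs, outputs):
    """Approximate FLOPs for one forward pass of a fully connected layer.

    A (batch x inputs) matrix times an (inputs x outputs) matrix needs
    batch * inputs * outputs multiply-add pairs, counted as 2 FLOPs each.
    """
    return 2 * batch_size * inputs * outputs


# Hypothetical workloads, chosen only for illustration.
tabular = dense_layer_flops(batch_size=32, inputs=20, outputs=64)
image = dense_layer_flops(batch_size=32, inputs=224 * 224 * 3, outputs=64)

print(f"tabular: {tabular:,} FLOPs")
print(f"image:   {image:,} FLOPs")
print(f"ratio:   {image / tabular:,.0f}x")
```

Swapping 20 tabular features for the pixels of one small color image multiplies the work for this one layer by several thousand, and real image models stack many layers.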

So let’s see what we need to handle anything from small-time recommendation systems to large convolutional networks.

Best PC Hardware for Machine Learning

Now we’ll get to the bit you’re actually here for: building a PC for Machine Learning.

Disclaimer: We’re focusing on entry-level general Machine Learning tasks for individuals starting out in the field. If you’re representing an organisation or are looking to purchase hardware north of $5,000, we recommend you find a partner in the field that has already successfully deployed and uses such pro-level hardware.

Source: AMD

We’ll outline budget, value, and performance hardware picks for all different components so that you can create a PC regardless of what your budget might be.

Best CPU for Machine Learning

Even though CPU performance might not be as important for Machine Learning as GPU performance, certain libraries still work better or only work on CPUs.

Because of that, and not wanting to bottleneck your PC, we need to make sure that we pick a good CPU to go with the rest of the system as well.

There’s also the fact that certain ML models working with things such as time-series data benefit a lot from having a good CPU.

CPU Recommendations

- Performance – AMD Ryzen Threadripper 3960X: With 24 cores and 48 threads, this Threadripper offers plenty of parallel computing power for CPU-bound libraries and data preprocessing.

- Value – Intel Core i7-12700K: At a combined 12 cores and 20 threads, you get fast work performance and computation speed.

- Budget – Intel Core i5-12600K: With a combined 10 cores and 16 threads, you get a great CPU at an amazing price.

Best GPUs for Machine Learning

Due to the intensive nature of the model training phase, a GPU is crucial for training your model faster, because it runs many operations in parallel instead of sequentially.

Depending on the library used, CUDA or OpenCL can greatly affect GPU usage.

Nvidia graphics cards are usually the best choice for ML libraries with CUDA support, and AMD cards are better for OpenCL or non-CUDA supporting libraries.

Since most libraries like PyTorch, TensorFlow, OpenCV, and Keras favor Nvidia a lot more than AMD, we will only be covering Nvidia offerings.

Though if you really want to get an AMD GPU or any other GPU, we have handy dandy AMD and Nvidia GPU performance breakdowns available.

GPU Recommendations

- Performance – GeForce RTX 3090: This absolute beast of a GPU is powered by Nvidia’s Ampere (2nd-gen RTX) architecture and comes with high-end encoding and computing performance and 24GB of GDDR6X VRAM. It will chew through anything you throw at it.

- Value – GeForce RTX 3060: Based on the same architecture as the RTX 3090, the RTX 3060 is an excellent card with RT and Tensor cores that’ll give great training performance and efficiency.

- Budget – GeForce GTX 1060: The GTX 1060 is still a respectable GPU when it comes to most tasks. However, I wouldn’t expect much when it comes to large-parameter deep learning models or neural networks.

All things considered, I would recommend that you save up and buy an RTX 3060 instead. Of course, all of this is if you’re using your own workstation/PC for your work. Outsourcing to network clusters, which spread supported libraries across multiple PCs and GPUs, is also a possibility.
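If you’re wondering whether a "large parameter" model will fit on a given card, a rough estimate helps. The sketch below assumes FP32 training with the Adam optimizer, which needs about 16 bytes per parameter (4 for the weight, 4 for the gradient, 8 for the optimizer state) before counting activations. The model sizes and the 80% headroom are hypothetical.

```python
BYTES_PER_PARAM_TRAINING = 16  # assumption: FP32 weights + gradients + Adam state


def training_vram_gb(num_parameters):
    """Rough lower bound on VRAM needed to train, ignoring activations."""
    return num_parameters * BYTES_PER_PARAM_TRAINING / 1024**3


def fits(num_parameters, vram_gb, headroom=0.8):
    """True if the estimate fits in a fraction (headroom) of the card's VRAM."""
    return training_vram_gb(num_parameters) <= vram_gb * headroom


# Hypothetical model sizes; 24 GB matches the RTX 3090 listed above.
for params in (25_000_000, 350_000_000, 1_500_000_000):
    print(f"{params:>13,} params -> ~{training_vram_gb(params):.1f} GB, "
          f"fits in 24 GB: {fits(params, 24)}")
```

Treat the result as a floor rather than a guarantee, since batch size and activations can easily add several more gigabytes.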

What are TPUs?

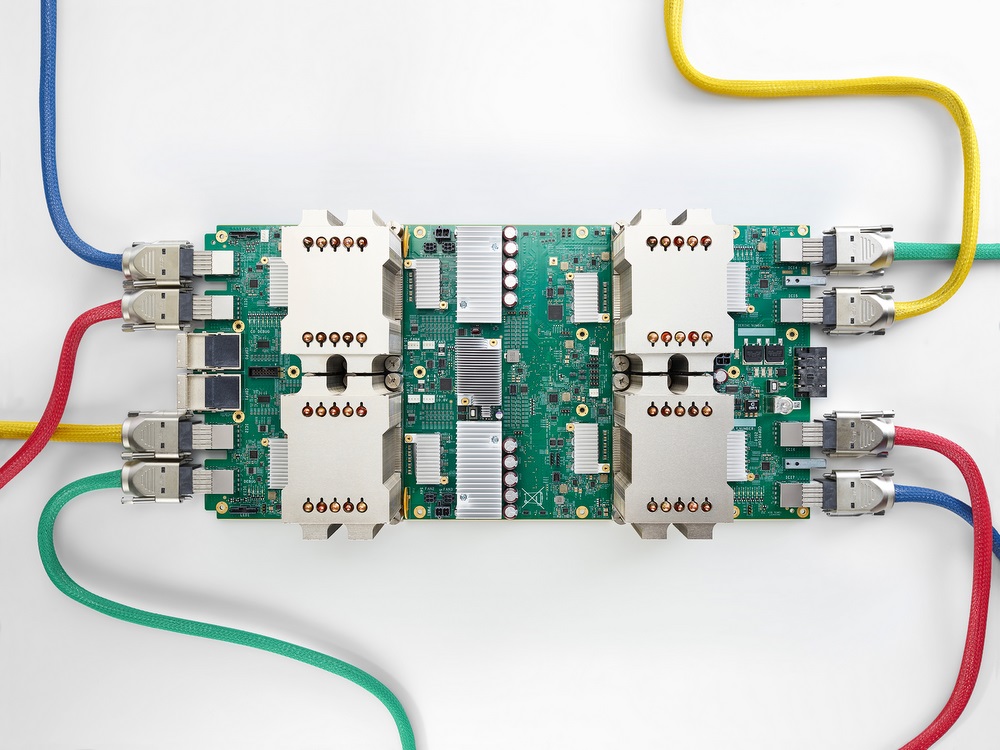

I mentioned TPUs previously, so let’s figure out what they are.

If GPUs are dedicated to calculating graphics equations, TPUs (Tensor Processing Units) can be thought of as hardware dedicated to optimizing machine learning equations.

Google is credited with the creation of TPUs back in 2016.

These TPUs are AI accelerators: application-specific integrated circuits (ASICs) built for machine learning applications.

However, TPUs are specialized hardware that you can’t really get as an individual, although you can rent them via Google Cloud.

Source: Google

So, because of this, TPUs aren’t really relevant to our suggestions here.

Best RAM for Machine Learning

ML algorithms perform a lot of processes at the same time, and these operations rely heavily on the CPU and GPU, so they are the things that most people think about when it comes to important components.

But good RAM is crucial as well.

RAM caching, for example, is very important to the whole process.

Keeping your working data cached in RAM cuts down on repeated reads from disk and speeds up each iteration the algorithm makes over the data.

The RAM size matters as well. If you are on a budget, 16GB of RAM would generally be sufficient.

However, I would recommend 32GB or above, as it’s quite easy to chew through 16GB of RAM these days, and the price difference isn’t all that big.
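A quick way to sanity-check the 16GB versus 32GB question is to estimate how big your dataset is once it’s loaded. This sketch assumes a dense table of 32-bit numbers and that only about half of your RAM is really free for data. Both the dataset shape and the `usable_fraction` value are hypothetical.

```python
def dataset_size_gb(rows, columns, bytes_per_value=4):
    """Size of a dense numeric table in GB (4 bytes = one 32-bit float)."""
    return rows * columns * bytes_per_value / 1024**3


def fits_in_ram(rows, columns, ram_gb, usable_fraction=0.5):
    """Assume only part of RAM is free for data (OS, tools, temporary copies)."""
    return dataset_size_gb(rows, columns) <= ram_gb * usable_fraction


# Hypothetical dataset: 50 million rows with 60 numeric features.
size = dataset_size_gb(50_000_000, 60)
print(f"dataset: ~{size:.1f} GB")
for ram in (16, 32, 64):
    print(f"{ram} GB RAM -> fits: {fits_in_ram(50_000_000, 60, ram)}")
```

If the data doesn’t fit, you either load it in chunks from disk or buy more RAM, which is where storage speed starts to matter.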

RAM Recommendations

- Performance – Corsair Vengeance LPX 32GB (2 x 16GB) 3600MHz DDR4 CL18

- Value – Crucial Ballistix 16GB (2 x 8GB) 3000MHz DDR4 CL15

Best Storage for Machine Learning

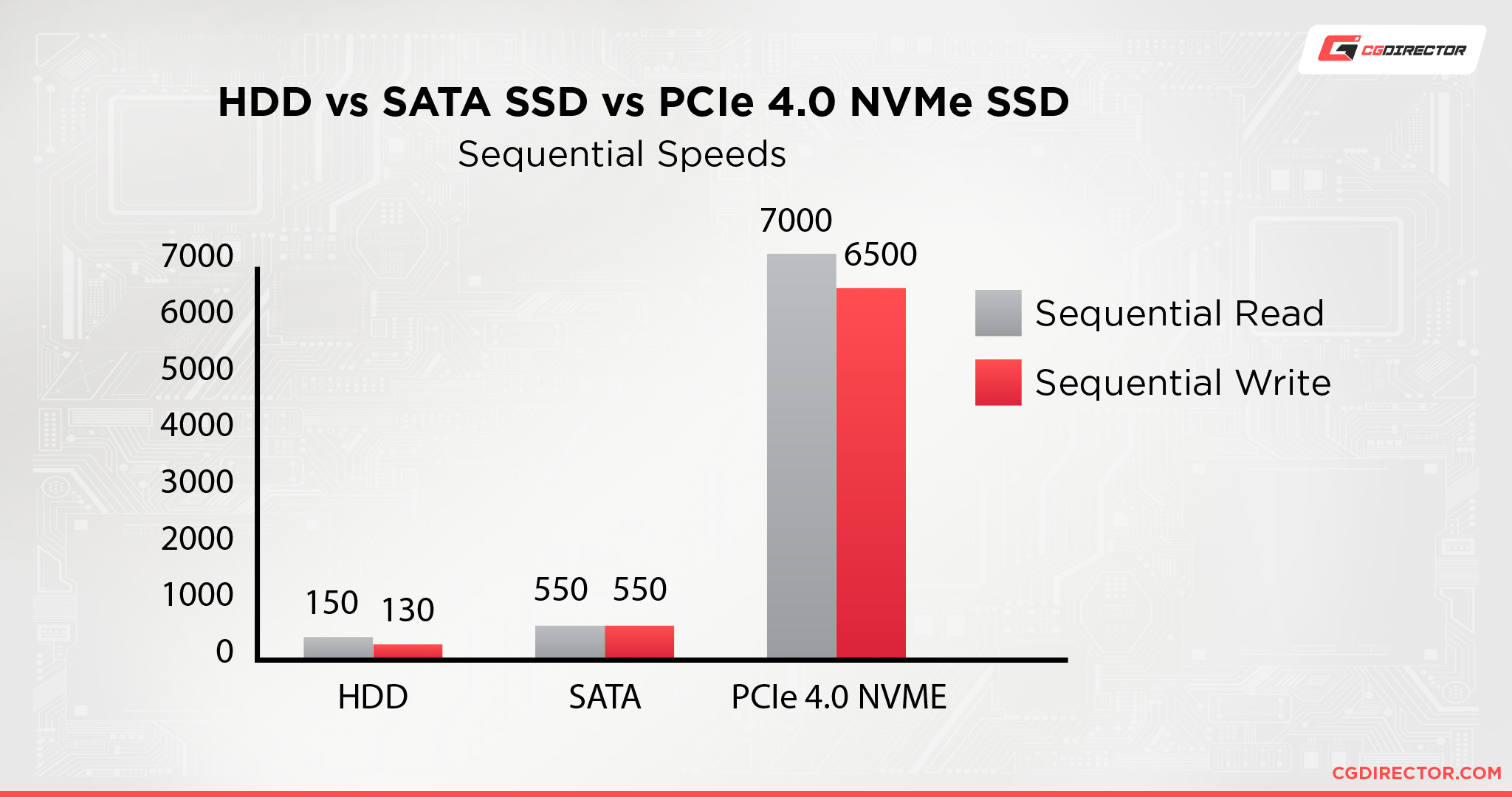

Data processing is an integral part of the ML process.

During training, some of your datasets will be in your hard drive and will then need to be accessed.

So your storage needs to be fast and large enough to accommodate this without any major slowdowns.

The file types used in the data sets matter as well.

For small datasets made up of lower-precision data, an HDD (Hard Disk Drive) would be sufficient.

However, for large datasets of 32-bit data, an SSD/NVMe drive would be required, as an HDD would be too slow to keep the training process fed with data.
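To see why drive speed matters, you can estimate how long one full pass over a dataset takes at different read speeds. The speeds below are assumed ballpark figures for sequential reads, not measurements, and the 100 GB dataset is hypothetical, so swap in the numbers from your own drive’s spec sheet.

```python
# Assumed sequential read speeds in MB/s; real drives vary, so treat these
# as placeholders rather than benchmarks.
ASSUMED_READ_MB_PER_S = {
    "HDD": 150,
    "SATA SSD": 550,
    "NVMe SSD": 3500,
}


def seconds_to_read(dataset_gb, read_mb_per_s):
    """Time to stream a dataset once from disk at a constant read speed."""
    return dataset_gb * 1000 / read_mb_per_s


dataset_gb = 100  # hypothetical dataset size
for drive, speed in ASSUMED_READ_MB_PER_S.items():
    minutes = seconds_to_read(dataset_gb, speed) / 60
    print(f"{drive:<9} ~{minutes:.1f} min per pass over {dataset_gb} GB")
```

Training usually makes many passes over the data, so a few extra minutes per pass adds up quickly.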

Considering how cheap SSDs have gotten over the years, if you want cheap, bulk storage at reasonable enough speeds, an SSD is the obvious option.

NVMe drives are good as well. They’re better than SATA SSDs in every way except for the price, but the benefits they offer aren’t absolutely needed for our use cases, so unless you want the best of the best, SATA SSDs are generally good enough.

Though these days, NVMe drives are only marginally more expensive than SATA SSDs, so I’d recommend checking out both to see which one fits your budget better.

If the difference between a 1TB NVMe drive and a 1TB SATA SSD is a couple of bucks, just get the NVMe drive instead.

I would also recommend getting at least two drives and using one as a backup.

You don’t worry about data loss until it actually happens and, trust me, it’s better to be prepared than have a crisis at 3 in the morning.

Still, if you’re on a really strict budget, separate drives aren’t absolutely essential.

Perhaps you could use cloud storage as a backup as well.

But at a higher budget, two to four SSD/NVMe drives are good to have.

Storage Recommendations

- Performance – Samsung 980 Pro 1TB NVMe Gen 4 SSD

- Value – Samsung 980 500GB NVMe Gen 3 SSD

- Budget – Crucial MX500 250GB SSD

Best Motherboard for Machine Learning

Picking a motherboard on its lonesome is hard because you never really pick a motherboard first.

You pick what CPU you want and then you pick the motherboard around that.

But there are still certain aspects that you should look out for regardless of the kind of CPU you go with: things like PCIe slots, RAM slots, and I/O (Input/Output) options.

Motherboard Recommendations

- Performance – ASRock TRX40 Creator – Best motherboard for AMD Threadrippers.

- Performance – Gigabyte Z690 AORUS MASTER – Best motherboard for Intel Alder Lake CPUs.

- Value – Gigabyte Z690 UD – Best motherboard for budget Intel Alder Lake CPUs.

Image Credit: GIGABYTE

Best Power Supplies for Machine Learning

PSUs (Power Supply Units) are pretty easy to pick compared to the rest of a PC.

Just calculate the total wattage requirements of your PC and then pick a PSU rated at least 80 Plus Gold that can deliver 100 to 250W more than that total. The extra headroom makes sure that power spikes don’t turn the PC off, and it leaves room for future upgrades.
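The wattage math is simple enough to sketch in a few lines. The per-component power figures below are hypothetical placeholders, so look up the real numbers for your parts. The 150W headroom is just one value inside the suggested 100 to 250W range, and the PSU sizes mirror the common 750W, 850W, and 1000W tiers.

```python
def recommended_psu_watts(component_watts, headroom_watts=150):
    """Sum the component draw and add headroom (100 to 250 W is suggested)."""
    return sum(component_watts.values()) + headroom_watts


def pick_psu(required_watts, available=(750, 850, 1000)):
    """Return the smallest listed PSU size that covers the requirement."""
    for size in sorted(available):
        if size >= required_watts:
            return size
    return None  # nothing in the list is large enough


# Hypothetical power draw per component in watts; check your parts' spec sheets.
build = {"cpu": 190, "gpu": 350, "motherboard_ram_storage": 80, "fans_misc": 30}

required = recommended_psu_watts(build)
print(f"required: {required} W -> PSU: {pick_psu(required)} W")
```

If `pick_psu` returns `None`, the build needs a bigger unit than any in the list.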

Also, be sure to get a fully modular PSU.

A semi-modular or non-modular PSU might be a little cheaper, but they’re a pain to work with.

So spend a bit more and get a good modular PSU.

You rarely change out good PSUs between PC builds, so getting something well made will last you years.

PSU Recommendations

- 750W: Seasonic PRIME GX-750

- 850W: Seasonic PRIME GX-850

- 1000W: Seasonic PRIME GX-1000

Best Case for Machine Learning

Cases won’t really affect your performance. And any case with half-decent airflow will work just fine.

So this bit is completely up to you.

Just make sure that it has enough space for everything you want to put in it and that it has good ventilation.

Case Recommendations

- Mid-Tower: NZXT H510

- Mid-Tower: Corsair iCUE 4000X

- Full Tower: Thermaltake View 51

- Big Tower: Fractal Design Define XL R2

Best PC Builds at Different Price Points

Best Performance Computer for Machine Learning – $4000

Best Value Computer for Machine Learning – $2500

Best Budget Computer for Machine Learning – $1500

Why no pro-level hardware?

In this article, we’re focusing on personal Machine Learning workstations for individuals with moderate budgets.

There’s also the possibility of going with professional-level hardware: Threadripper Pro or Intel Xeon CPUs, for example, or AMD Radeon Pro, Nvidia Tesla, and Nvidia Titan GPUs. While such targeted hardware is a lot better for specific Machine Learning tasks, it is also disproportionately more expensive and might very well exceed your budget if you’re just starting out with tinkering in Machine Learning.

If you’re looking at spending north of $5,000 on Machine Learning hardware, either for yourself or for your organization, our recommendation is to find a partner in the field that has already successfully deployed and uses such pro-level hardware.

In Summary

Hopefully, that gave you a good idea of what you need to keep in mind when picking out parts for a machine learning PC.

Considering how many different variables there are to account for, it’s not easy to say just get this or that.

The main thing is that you need to figure out what’s most important to you/your work first of all.

You’ll have a much easier time when you know the specifics of what you’re looking for as opposed to a PC for all machine learning, which is a bit broad.

Over to You

Did that answer all your questions about what it takes to build a PC for machine learning? Got any other unanswered questions? Feel free to ask us in the comments or our forum!


5 Comments

16 January, 2024

Thank you for the great post, I was looking for it for a long time.

17 January, 2024

Hey Kevin, Glad we could help! Let me know of any questions you might have 🙂

Alex

29 November, 2022

Can I swap the “budget” rig Mother board for this one if i want WiFi? Is everything else still compatible?

ASUS ROG Strix B660-A Gaming WiFi D4 LGA 1700(Intel 12th Gen) ATX Gaming Motherboard(PCIe 5.0,12+1 Power Stages,WiFi 6, 2.5 Gb LAN, 3xM.2 Slots,PCIe 4.0 NVMe® SSD Support, USB 3.2 Gen 2×2 Type-C)

30 November, 2022

Yes, that’s possible! The motherboard is still compatible with the listed CPU. You swapped the CPU with the i5 12600? That’s fine as well.

Cheers,

Alex

29 November, 2022

Ordered everything in the “budget” recommendations except the CPU. I picked the exact same one but without the integrated graphics. Is everything still compatible? I am new at this… Is this build going to be able to have wifi and bluetooth?